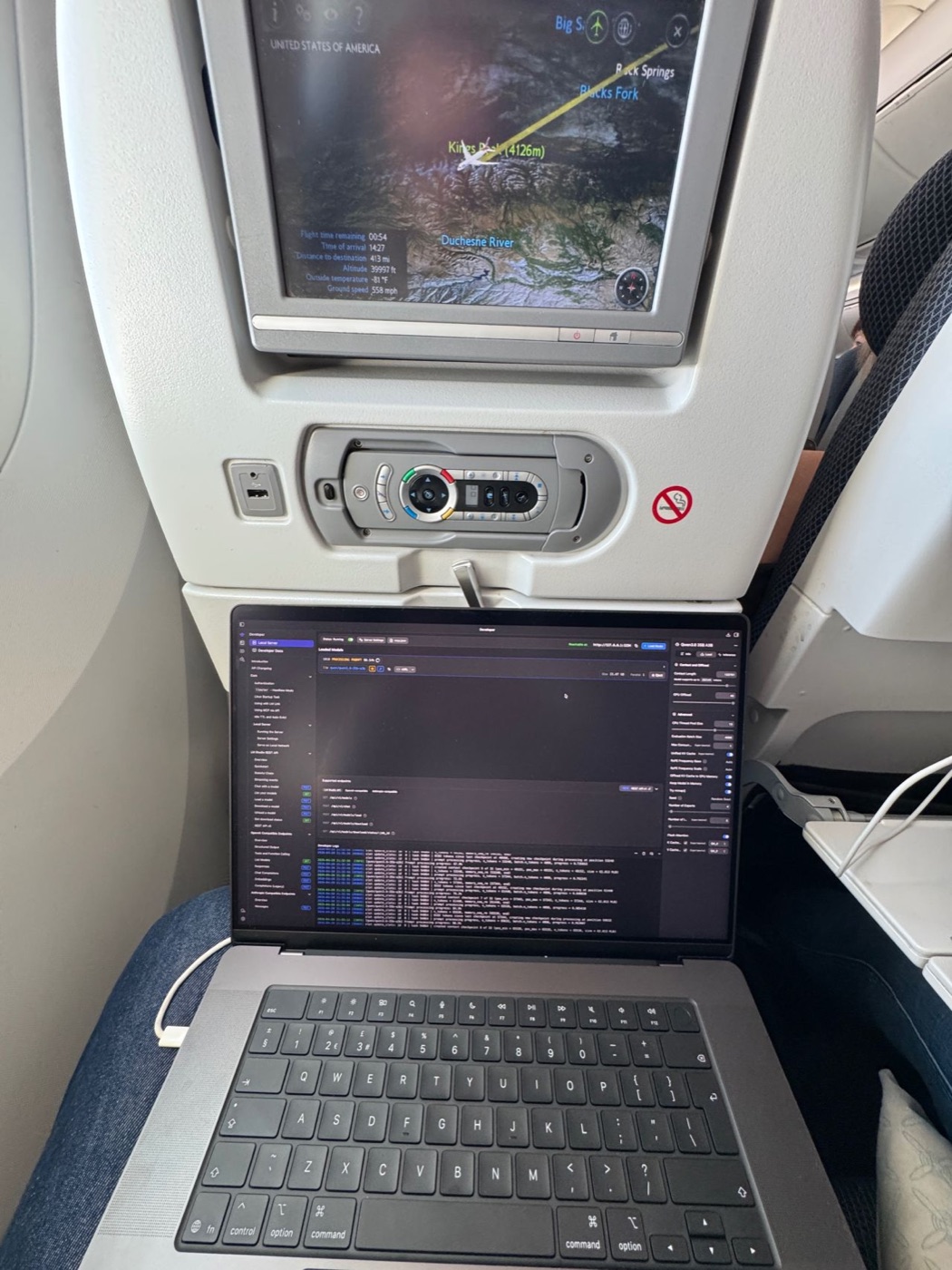

Running Local LLMs Offline on a Ten-Hour Flight

I flew from London to Google Cloud Next 2026 in Las Vegas. Ten hours with no in-flight wifi. I used the time to test how far a modern MacBook can carry engineering work on local LLMs alone.

Setup

A week-old MacBook Pro M5 Max with 128GB of unified memory and a 40-core GPU.

Gemma 4 31B and Qwen 4.6 36B via LM Studio.

The 100 most common Docker images, plus the top programming languages and enough dependencies to build functional sites with rich visualisations.

Countless CLIs, with opencode, rtk, instantgrep and duckdb used most.

What I built

A billing analytics tool covering two years of loveholidays cloud spend. DuckDB underneath, with a custom UI for slicing the data along dimensions the standard dashboards don’t expose. It surfaced patterns and cross-service correlations that had been hard to uncover.

I had wanted to explore this area for a while, but could never prioritise it against the whirlwind of my other responsibilities. With ten hours to spare, top-of-the-range hardware and open-source models, I decided to give it a go.

Alongside that, I processed roughly 4M tokens on smaller tasks: refactors, CLI scaffolding, documentation. For tight-scope work, Gemma and Qwen produced output comparable to the frontier models I normally use.

What broke

Four limits showed up.

Power. Roughly 1% of battery per minute under sustained load. The battery kept draining even when plugged into a 60W supply.
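The relationship between load, adapter power and drain rate is simple arithmetic. A minimal Python sketch, assuming a hypothetical 100Wh battery (check your own model's capacity) and treating the full adapter output as available to the system:

```python
def drain_pct_per_min(load_w: float, adapter_w: float, battery_wh: float) -> float:
    """Battery percentage lost per minute; a negative value means net charging."""
    net_w = load_w - adapter_w  # watts the battery must supply on top of the adapter
    # 1% of capacity is battery_wh / 100 watt-hours, and an hour is 60 minutes.
    return net_w * 100 / (battery_wh * 60)


def minutes_to_empty(level_pct: float, load_w: float, adapter_w: float, battery_wh: float) -> float:
    """Minutes until 0% at a constant load (infinite if the battery is not draining)."""
    rate = drain_pct_per_min(load_w, adapter_w, battery_wh)
    return level_pct / rate if rate > 0 else float("inf")


if __name__ == "__main__":
    # Hypothetical 100 Wh pack; 81.6 W and 60 W come from the powermonitor snapshot.
    print(f"{drain_pct_per_min(81.6, 60, 100):.2f} %/min")
    print(f"{minutes_to_empty(14, 81.6, 60, 100):.0f} min from 14%")
```

Under these assumptions a 60W net deficit on a 100Wh pack is exactly 1% per minute. The snapshot's total appears to cover only the CPU, GPU and ANE, so whole-system draw (display, memory, SSD) would be higher and the real drain faster than this estimate.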

Heat. At 70–80W sustained, the chassis runs hot enough to be uncomfortable. The in-flight blanket and pillow saved my knees but trapped the heat, making the overheating worse.

Context. Throughput and latency degrade noticeably past 100k tokens.
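One common reason for this kind of slowdown is the key/value (KV) cache, which grows linearly with context and is read for every generated token. A back-of-the-envelope Python sketch; the layer count, KV-head count and head dimension below are hypothetical placeholders, not the published architecture of Gemma 4 or Qwen:

```python
def kv_cache_bytes(tokens: int, layers: int, kv_heads: int, head_dim: int,
                   bytes_per_elem: int = 2) -> int:
    """KV cache size: one key and one value vector per token, per layer, per KV head."""
    return 2 * layers * kv_heads * head_dim * tokens * bytes_per_elem


if __name__ == "__main__":
    # Hypothetical dimensions for a ~30B model with grouped-query attention, fp16 cache.
    dims = dict(layers=64, kv_heads=8, head_dim=128)
    for tokens in (10_000, 100_000):
        gib = kv_cache_bytes(tokens, **dims) / 2**30
        print(f"{tokens:>7,} tokens -> {gib:.1f} GiB of KV cache")
```

Because each decoded token attends over the whole cache, per-token work also grows with context, which is consistent with throughput falling as sessions pass 100k tokens.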

Loops. A handful of prompts sent the model into an infinite loop that needed manual intervention to break. It was unclear whether the fault lay in the opencode orchestration layer or in the model itself.

What helped: one problem per session, long plans written to markdown for re-ingestion, and minimal tool-call overhead via rtk. I avoid compaction because it is very slow to run.

Instrumentation

I built two tools during the flight.

powermonitor: a CLI that reads live Mac power telemetry (CPU, GPU, ANE, adapter, battery). I have since pushed a fix that detects adapter power-source changes faster.

Sample output:

⚡ Total: 81.6 W

Charging (split updates when battery % changes)

CPU: 4.5 W GPU: 77.2 W ANE: 0.0 W

Adapter: 60 W

Battery: 14% Source: AC

Power (W)

▁▂▃▃▄▃▄▄▅▄▅▅▅▄▅▄▅▅▅▄▅▆▅▄▅▅▆▅▄▄▅▄▅▅

Min: 47.5 Avg: 71.5 Max: 87.3 W

14144 samples
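A minimal Python sketch of how a display like this could be produced; it is not powermonitor's implementation. It assumes the text formats printed by macOS `powermetrics` (run with sudo; lines such as `CPU Power: 4500 mW` on Apple Silicon) and by `pmset -g batt` (`Now drawing from 'AC Power'` plus a line containing `14%;`). Verify both formats on your own machine. The sparkline and Min/Avg/Max helpers mirror the last lines of the output above.

```python
from __future__ import annotations

import re

# Assumed line formats; check them against your own macOS version.
# powermetrics prints e.g. "CPU Power: 4500 mW" on Apple Silicon.
POWER_RE = re.compile(r"^(CPU|GPU|ANE) Power:\s*(\d+)\s*mW", re.MULTILINE)
# pmset -g batt prints e.g. "Now drawing from 'AC Power'" and "...\t14%; charging; ..."
SOURCE_RE = re.compile(r"Now drawing from '([^']+)'")
PERCENT_RE = re.compile(r"(\d+)%;")

BARS = "▁▂▃▄▅▆▇█"  # eight block heights, lowest to highest


def parse_powermetrics(text: str) -> dict[str, float]:
    """Map each component to its power draw in watts, e.g. {'CPU': 4.5}."""
    return {name: int(mw) / 1000 for name, mw in POWER_RE.findall(text)}


def parse_pmset_batt(text: str) -> tuple[str | None, int | None]:
    """Return (power source, battery percentage); None where a field is missing."""
    source = SOURCE_RE.search(text)
    percent = PERCENT_RE.search(text)
    return (source.group(1) if source else None,
            int(percent.group(1)) if percent else None)


def sparkline(samples: list[float]) -> str:
    """Scale samples between their min and max onto the eight block characters."""
    lo, hi = min(samples), max(samples)
    span = (hi - lo) or 1.0  # a flat series would otherwise divide by zero
    return "".join(BARS[round((s - lo) / span * (len(BARS) - 1))] for s in samples)


def summary(samples: list[float]) -> str:
    """The Min/Avg/Max line shown under the sparkline."""
    avg = sum(samples) / len(samples)
    return f"Min: {min(samples):.1f} Avg: {avg:.1f} Max: {max(samples):.1f} W"
```

Polling both commands on an interval and appending each total to a rolling list is enough to redraw the sparkline as samples arrive.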

lmstats: a tool that reads LM Studio telemetry and reports token throughput, latency distributions, and context-window behaviour across a session.
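The kind of statistics lmstats reports can be sketched in a few lines of Python. This does not read LM Studio's telemetry: the `Request` record and its fields are hypothetical stand-ins for whatever per-request timings you collect, and the 100k-token split simply mirrors the threshold where degradation showed up on the flight.

```python
import math
import statistics
from dataclasses import dataclass


@dataclass
class Request:
    """One completed request (hypothetical record shape)."""
    prompt_tokens: int      # context size sent to the model
    completion_tokens: int  # tokens generated
    ttft_s: float           # time to first token, seconds
    total_s: float          # wall-clock time for the whole request, seconds

    @property
    def decode_tps(self) -> float:
        """Generation throughput, excluding prompt processing."""
        gen_s = self.total_s - self.ttft_s
        return self.completion_tokens / gen_s if gen_s > 0 else 0.0


def percentile(values: list[float], p: float) -> float:
    """Nearest-rank percentile, p in (0, 100]."""
    ordered = sorted(values)
    rank = max(1, math.ceil(p / 100 * len(ordered)))
    return ordered[rank - 1]


def report(requests: list[Request], split_tokens: int = 100_000) -> dict[str, dict[str, float]]:
    """Latency and throughput, grouped by whether the context is below the split."""
    groups: dict[str, list[Request]] = {}
    for r in requests:
        key = f"<{split_tokens}" if r.prompt_tokens < split_tokens else f">={split_tokens}"
        groups.setdefault(key, []).append(r)
    return {
        key: {
            "n": len(rs),
            "ttft_p50_s": percentile([r.ttft_s for r in rs], 50),
            "ttft_p95_s": percentile([r.ttft_s for r in rs], 95),
            "decode_tps_median": statistics.median(r.decode_tps for r in rs),
        }
        for key, rs in groups.items()
    }
```

Comparing the two groups shows whether time to first token (dominated by prompt processing) or decode speed is what degrades as context grows.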

Both follow the same pattern we apply at loveholidays at a larger scale: instrument the system before acting on it.

Community responses

The LinkedIn post attracted several threads worth engaging with.

Steve Turner noted that running locally, where cost is physically visible, made him more critical of what he asks of cloud models. This is the mechanical sympathy principle applied to AI: direct exposure to heat, power, and context effects builds intuition about where inference is cheap and where it’s expensive. That intuition transfers back to cloud usage.

Jackson Oaks made the case for Apple Silicon's performance per watt over NVIDIA for battery-constrained workloads.

The cable

British Airways advertises 70W per seat, but powermonitor showed only 60W delivered on the outbound flight. I decided to get to the bottom of this discrepancy on arrival.

In the hotel I tested the M5 Max under equivalent load with two cables. Same adapter, same socket, same workload.

iPhone cable: 60W delivered

MacBook cable: 94W delivered

A 34W gap (36% of the 94W) from cable selection alone. On the outbound flight, I was throttling myself to 60W against a 70W ceiling.

The return flight will test this with the correct cable. I expect at least a 16% improvement (60W up to the 70W cap), with more headroom once the cable is no longer the limit.
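The cable numbers are consistent with USB Power Delivery current limits. A small Python sketch of the arithmetic, assuming (not confirmed above) that the iPhone cable is a standard USB-C cable without an e-marker chip. Such cables are limited to 3A, which at the 20V maximum of PD's standard power range caps delivery at 60W.

```python
PD_SPR_MAX_VOLTAGE_V = 20  # highest fixed voltage in USB PD's standard power range


def cable_ceiling_w(max_current_a: float, voltage_v: float = PD_SPR_MAX_VOLTAGE_V) -> float:
    """Maximum power a cable can carry at a given PD voltage."""
    return max_current_a * voltage_v


def pct_increase(old_w: float, new_w: float) -> float:
    """Relative gain going from old_w to new_w."""
    return (new_w - old_w) / old_w * 100


def gap_share_pct(low_w: float, high_w: float) -> float:
    """The gap expressed as a share of the higher figure."""
    return (high_w - low_w) / high_w * 100


if __name__ == "__main__":
    print(cable_ceiling_w(3))               # 3 A cable without an e-marker
    print(cable_ceiling_w(5))               # 5 A e-marked cable
    print(f"{gap_share_pct(60, 94):.1f}%")  # hotel test: iPhone vs MacBook cable
    print(f"{pct_increase(60, 70):.1f}%")   # outbound 60 W -> advertised 70 W seat
```

The last line is the 60W-to-70W step behind the expected return-flight improvement.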

Without instrumentation, I would never have discovered my mistake of using an iPhone cable.

Takeaways

Local inference is viable for a meaningful subset of engineering work: tight-scope coding, exploratory tooling, and tasks that don’t clear the cost-benefit bar with cloud inference. Large-context reasoning, agentic workflows needing frontier intelligence, and high-value tasks still belong in the cloud.

The secondary effect: local exposure to inference cost forces discipline around prompt size, tool-call overhead, and context management. That discipline carries back to cloud usage.

What’s next

Return flight with the correct cable. Publishing the numbers.

Explore small LLMs running on the Neural Engine for usefulness, speed and power consumption, since the ANE is supposed to be very efficient.